Regression Sum of Squares Calculator

Instructions: Use this regression sum of squares calculator to compute \(SS_R\), the sum of squared deviations of the predicted values from the mean of the dependent variable. Please input the data for the independent variable (\(X\)) and the dependent variable (\(Y\)) in the form below:

More about this Regression Sum of Squares Calculator

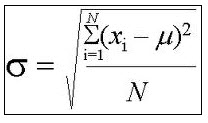

In general terms, a sum of squares is the sum of the squared deviations of a sample from its mean. For a simple sample of data \(X_1, X_2, ..., X_n\), the sum of squares (\(SS\)) is simply:

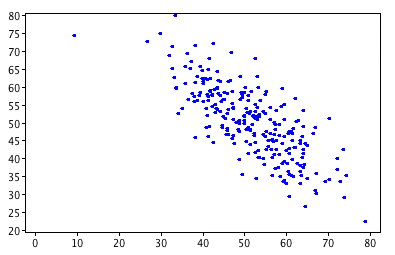

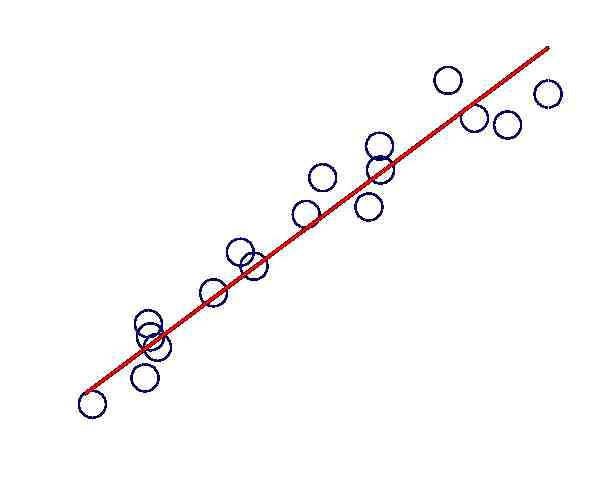

\[ SS = \displaystyle \sum_{i=1}^n (X_i - \bar X)^2 \]

So, in the context of a linear regression analysis, what is the meaning of a regression sum of squares? It is quite similar. In this case we have sample data \(\{X_i\}\) and \(\{Y_i\}\), where \(X\) is the independent variable and \(Y\) is the dependent variable. The regression sum of squares \(SS_R\) is computed as the sum of the squared deviations of the predicted values \(\hat Y_i\) from the mean \(\bar Y\). Mathematically:

\[ SS_R = \displaystyle \sum_{i=1}^n (\hat Y_i - \bar Y)^2 \]

A simpler way of computing \(SS_R\), which leads to the same value, is

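\[ SS_R = \displaystyle \hat \beta_1 \left( \sum_{i=1}^n X_i Y_i - \frac{1}{n}\left(\sum_{i=1}^n X_i\right)\left(\sum_{i=1}^n Y_i\right) \right)= \hat \beta_1 \times SS_{XY} \]

Here \(\hat \beta_1 = SS_{XY}/SS_{XX}\) is the estimated slope of the regression line, with \(SS_{XX} = \sum_{i=1}^n (X_i - \bar X)^2\).

Other Sums of Squares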

There are other types of sums of squares. For example, if you are instead interested in the squared deviations of the observed values from the predicted values, you should use this residual sum of squares calculator. There are also the sums of squares \(SS_{XX}\) and \(SS_{YY}\), and the sum of cross products \(SS_{XY}\).
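
As a rough illustration of how these quantities fit together, the following Python sketch computes \(SS_{XX}\), \(SS_{XY}\) and \(SS_{YY}\), then obtains \(SS_R\) both from its definition and from the shortcut \(\hat \beta_1 \times SS_{XY}\), along with the residual sum of squares. It assumes a simple linear regression with one predictor. The function names `sums_of_squares` and `regression_sum_of_squares` and the sample data are made up for this example and are not part of the calculator.

```python
def sums_of_squares(x, y):
    """Return (SS_XX, SS_XY, SS_YY) for paired samples x and y."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    ss_xx = sum((xi - mean_x) ** 2 for xi in x)
    ss_yy = sum((yi - mean_y) ** 2 for yi in y)
    ss_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return ss_xx, ss_xy, ss_yy


def regression_sum_of_squares(x, y):
    """Return (SS_R from the definition, SS_R from the shortcut, SS_E)."""
    n = len(x)
    ss_xx, ss_xy, ss_yy = sums_of_squares(x, y)
    if ss_xx == 0:
        raise ValueError("all X values are equal; the slope is undefined")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    beta1 = ss_xy / ss_xx            # estimated slope
    beta0 = mean_y - beta1 * mean_x  # estimated intercept
    y_hat = [beta0 + beta1 * xi for xi in x]
    # Definition: squared deviations of the predictions from the mean of Y
    ss_r_definition = sum((yh - mean_y) ** 2 for yh in y_hat)
    # Shortcut: slope times the sum of cross products
    ss_r_shortcut = beta1 * ss_xy
    # Residual sum of squares: observed minus predicted
    ss_e = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))
    return ss_r_definition, ss_r_shortcut, ss_e


# Made-up illustrative data (not taken from the calculator)
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

ss_xx, ss_xy, ss_yy = sums_of_squares(x, y)
ss_r_def, ss_r_short, ss_e = regression_sum_of_squares(x, y)
print("SS_XX, SS_XY, SS_YY:", ss_xx, ss_xy, ss_yy)
print("SS_R (definition), SS_R (shortcut), SS_E:", ss_r_def, ss_r_short, ss_e)
```

For this sample, both routes give \(SS_R = 3.6\) up to floating-point rounding, and \(SS_R\) plus the residual sum of squares (\(2.4\)) equals \(SS_{YY} = 6\), which is the usual decomposition of the total variation.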

Other things you can do with these data

So, what else could you do when you have samples \(\{X_i\}\) and \(\{Y_i\}\)? Well, you can compute the correlation coefficient, or you may want to compute the linear regression equation with all the steps.
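
Both of these follow from the same sums of squares. The sketch below is one possible way to compute the correlation coefficient \(r = SS_{XY}/\sqrt{SS_{XX}\, SS_{YY}}\) and the coefficients of the fitted line \(\hat Y = \hat \beta_0 + \hat \beta_1 X\) in Python. The function name `correlation_and_line` and the sample data are hypothetical and chosen only for illustration.

```python
import math


def correlation_and_line(x, y):
    """Return (r, beta0, beta1) for a simple linear regression of y on x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    ss_xx = sum((xi - mean_x) ** 2 for xi in x)
    ss_yy = sum((yi - mean_y) ** 2 for yi in y)
    ss_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if ss_xx == 0 or ss_yy == 0:
        raise ValueError("X and Y must each contain at least two distinct values")
    r = ss_xy / math.sqrt(ss_xx * ss_yy)  # correlation coefficient
    beta1 = ss_xy / ss_xx                 # estimated slope
    beta0 = mean_y - beta1 * mean_x       # estimated intercept
    return r, beta0, beta1


# Made-up illustrative data (not taken from the calculator)
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]

r, beta0, beta1 = correlation_and_line(x, y)
print("r:", r)
print("fitted line: Y_hat =", beta0, "+", beta1, "* X")
```

In simple linear regression, \(r^2\) equals \(SS_R / SS_{YY}\), so the correlation coefficient and the regression sum of squares carry the same information about how much of the variation in \(Y\) the line explains.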