R Squared to Adjusted R Squared calculator

Instructions: Use this calculator to compute the adjusted R-Squared coefficient from the R-Squared coefficient. Please input the R-Squared coefficient \((R^2)\), the sample size \((n)\) and the number of predictors \((k)\) (without including the constant), in the form below:

Adjusted R Squared

The Adjusted R Squared coefficient is a correction to the common R-Squared coefficient (also known as the coefficient of determination).

This is particularly useful in the case of multiple regression with many predictors, because R-Squared never decreases when a predictor is added, even one unrelated to the dependent variable, so it overstates the explained variation. The adjusted R-Squared coefficient penalizes the number of predictors relative to the sample size, and so gives a more accurate reflection of the variation in the dependent variable that is explained by the model.

R-Squared to Adjusted R-Squared Formula

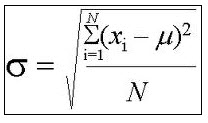

So, how do you convert R-squared to adjusted R-squared? The Adjusted R Squared coefficient is computed using the following formula:

\[\text{Adj. } R^2 = \displaystyle 1 - \frac{(1-R^2)(n-1)}{n-k-1}\]

where \(n\) is the sample size, \(k\) is the number of predictors (excluding the constant) and \(R^2\) is the known coefficient of determination we want to adjust.
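As a minimal sketch, the formula above can be written as a short Python function. The function name `adjusted_r_squared` and the input checks are illustrative assumptions, not part of this calculator; the checks assume that \(R^2\) lies between 0 and 1 and that \(n - k - 1\) is positive, so the denominator is valid.

```python
def adjusted_r_squared(r2: float, n: int, k: int) -> float:
    """Convert R^2 to adjusted R^2.

    r2: coefficient of determination (assumed to lie in [0, 1])
    n:  sample size
    k:  number of predictors, excluding the constant
    """
    # Assumed sanity checks (hypothetical, not taken from the calculator).
    if not 0.0 <= r2 <= 1.0:
        raise ValueError("r2 is expected to be between 0 and 1")
    if n - k - 1 <= 0:
        raise ValueError("n - k - 1 must be positive")

    # Adj. R^2 = 1 - (1 - R^2)(n - 1) / (n - k - 1)
    return 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)


if __name__ == "__main__":
    # Same R^2 and sample size, different numbers of predictors.
    print(round(adjusted_r_squared(0.75, 30, 4), 4))   # 0.71
    print(round(adjusted_r_squared(0.75, 30, 15), 4))  # 0.4821
```

With \(R^2 = 0.75\) and \(n = 30\), the adjustment is small for \(k = 4\) predictors but large for \(k = 15\), which illustrates how the correction grows as the number of predictors approaches the sample size.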

This solver converts R^2 to adjusted R^2. If you need to estimate a regression model, please use our multiple regression model calculator.

How do you calculate adjusted r2 in Excel?

The formula presented above is very simple to implement in Excel. If you assume that cell A1 = R^2, cell A2 = n and cell A3 = k, then you would use the formula "=1 - (1-A1)*(A2-1)/(A2-A3-1)".

If you are using Excel, then perhaps the easiest way is to run a linear regression procedure, which will report both R^2 and Adj. R^2. The disadvantage of using Excel is that you do not get the steps shown, unlike this adj. R^2 calculator, which gives you all the steps of the process.

What is the difference between multiple R-squared and adjusted R-squared?

The multiple R-squared is the regular, unadjusted coefficient of determination for a multiple regression. The adjusted R-squared is obtained from it by applying the correction for the sample size and the number of predictors.

The mechanism for computing the multiple R-squared, or multiple coefficient of determination, is interesting, as it involves running a linear regression model and then finding the regular coefficient of determination between observed and predicted values.
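The second step described above can be sketched in Python as the squared Pearson correlation between observed and predicted values. This is only an illustration: the function name `multiple_r_squared` and the sample numbers are hypothetical, and the predicted values are assumed to come from a least-squares regression that includes a constant, which is the setting where this squared correlation matches the usual R^2.

```python
def multiple_r_squared(observed, predicted):
    """Squared Pearson correlation between observed and predicted values.

    Assumes `predicted` holds fitted values from a linear regression
    with an intercept (an assumption of this sketch).
    """
    if len(observed) != len(predicted) or len(observed) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")

    n = len(observed)
    mean_obs = sum(observed) / n
    mean_pred = sum(predicted) / n

    # Sums of cross-products and squared deviations from the means.
    s_xy = sum((y - mean_obs) * (f - mean_pred)
               for y, f in zip(observed, predicted))
    s_yy = sum((y - mean_obs) ** 2 for y in observed)
    s_ff = sum((f - mean_pred) ** 2 for f in predicted)

    if s_yy == 0 or s_ff == 0:
        raise ValueError("observed and predicted values must vary")

    # r^2 = s_xy^2 / (s_yy * s_ff)
    return s_xy ** 2 / (s_yy * s_ff)


if __name__ == "__main__":
    # Hypothetical observed values and fitted values, for illustration only.
    observed = [3.0, 5.0, 7.0, 10.0, 12.0]
    predicted = [3.4, 4.8, 7.1, 9.6, 12.1]
    print(multiple_r_squared(observed, predicted))
```

The value returned here is the unadjusted R^2, which can then be corrected using the sample size and the number of predictors.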