Choice overload isn’t a UX problem; it’s a math problem wearing a friendly interface.

Open https://www.designrush.com/agency/graphic-design/us and you’ll see it instantly: a massive directory, endless filters, and that creeping feeling that “more options” just means “more uncertainty.”

Constraint-based reasoning is the upgrade from scrolling to ruling out: you state what must be true (budget, timeline, tech fit, style constraints) and let logic prune the universe into a shortlist you can actually choose from.

When “More Choices” Becomes “More Pain”

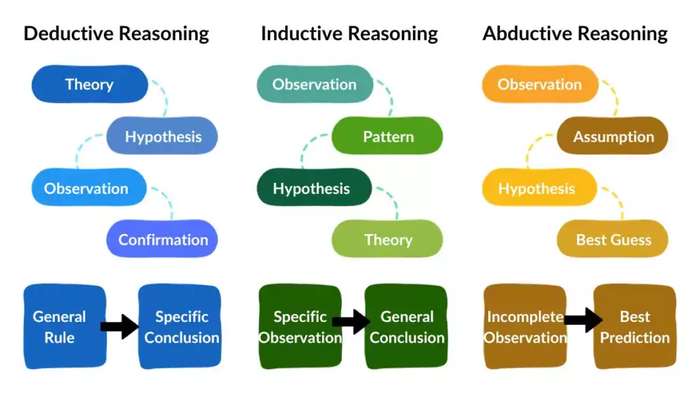

A large option set sounds like freedom until you’re staring at 40,000 combinations and your brain starts buffering. Constraint-based reasoning is the antidote: instead of searching for the right thing, you delete everything that can’t be right, fast.

That shift in mindset is the whole game.

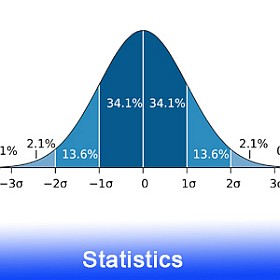

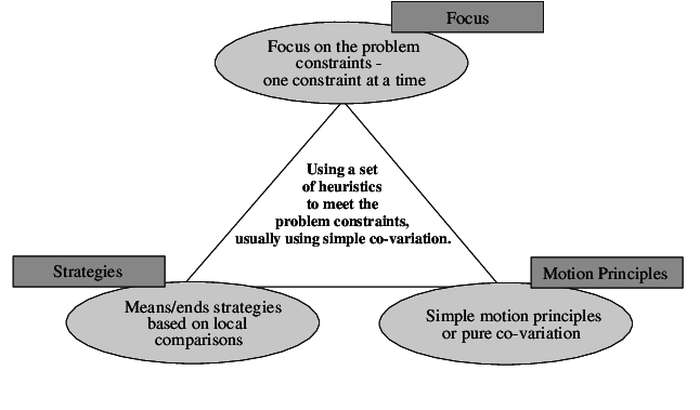

In constraint programming (CP), you describe a problem using decision variables (what can change), domains (allowed values), and constraints (rules that must hold).

Then the solver does what humans wish they could do at 2 a.m.: ruthless, logical elimination through constraint propagation.

Google’s OR-Tools frames CP as a great fit for planning and scheduling problems with heterogeneous constraints, which is basically “real life, but with more spreadsheets.”
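To make the three ingredients concrete, here is a minimal sketch in plain Python. The variables `x` and `y`, their domains, and the two rules are made up for illustration. It checks every combination, which is exactly what a real CP solver avoids, so read it as a picture of the vocabulary rather than of how a solver works.

```python
from itertools import product

# Decision variables and their domains (the values each one may take).
domains = {
    "x": range(1, 6),
    "y": range(1, 6),
}

# Constraints: rules every accepted assignment must satisfy.
constraints = [
    lambda a: a["x"] + a["y"] == 6,
    lambda a: a["x"] < a["y"],
]

def solutions(domains, constraints):
    """Yield every assignment that satisfies all constraints (naive check)."""
    names = list(domains)
    for values in product(*(domains[n] for n in names)):
        assignment = dict(zip(names, values))
        if all(check(assignment) for check in constraints):
            yield assignment

result = list(solutions(domains, constraints))
```

Of the 25 possible assignments, only `x=1, y=5` and `x=2, y=4` survive both rules.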

The “Filter First, Choose Later” Workflow

Think of constraint-based reasoning as an industrial-strength filter pipeline:

- Declare your universe. All possible options.

- Add constraints. Business rules, preferences, hard limits, “no way, absolutely not.”

- Propagate. The system prunes impossible values before it brute-forces anything.

- Search what’s left. Now the option set is small enough to reason about, optimize, or hand to a human.

That propagation step is where the magic lives.

A solver keeps tightening domains: “If A must be true, then B can’t be 7 anymore,” and so on, until nothing more can be removed (a fixed point) or some domain runs empty, which proves there is no solution.
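Here is a rough sketch of that tightening loop for binary constraints, in the spirit of textbook arc consistency. The helper names `revise` and `propagate` and the three-variable example are assumptions for illustration; production solvers use far more efficient bookkeeping.

```python
def revise(domains, x, y, allowed):
    """Drop values of x that have no compatible partner left in y's domain."""
    supported = {vx for vx in domains[x]
                 if any(allowed(vx, vy) for vy in domains[y])}
    changed = supported != domains[x]
    domains[x] = supported
    return changed

def propagate(domains, arcs):
    """Revise until nothing changes (fixed point) or a domain becomes empty."""
    changed = True
    while changed:
        changed = False
        for x, y, allowed in arcs:
            if revise(domains, x, y, allowed):
                changed = True
                if not domains[x]:
                    return False  # empty domain: no solution exists
    return True

domains = {"A": {1, 2, 3}, "B": {1, 2, 3}, "C": {1, 2, 3}}

# Two rules, A < B and B < C, each listed once per direction.
arcs = [
    ("A", "B", lambda a, b: a < b),
    ("B", "A", lambda b, a: a < b),
    ("B", "C", lambda b, c: b < c),
    ("C", "B", lambda c, b: b < c),
]

ok = propagate(domains, arcs)
```

In this toy case propagation alone settles everything: the domains shrink to `A={1}`, `B={2}`, `C={3}` without any search. Usually it only narrows things down, and search handles the rest.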

Source: ResearchGate


Example: Building a “Good Enough” Bundle Out of a Catalog

Say you’re configuring a product bundle (a laptop, a SaaS plan, a travel package, whatever). You’ve got:

- CPU: {i5, i7, Ryzen7},

- RAM: {16GB, 32GB},

- Storage: {512GB, 1TB},

- Delivery date: {Mon…Fri},

- Budget: numeric range,

- Compatibility rules (CPU X requires motherboard Y, plan tier limits feature Z),

- Preferences (“prefer 32GB if budget allows”).

If you treat this as a normal search problem, you’re tempted to generate combinations and check them. That’s like tasting every item in a grocery store to decide on dinner.

With constraints, you instead write rules like:

- total_cost ≤ budget

- if CPU = i7 then storage ≠ 512GB (pretend your i7 configs come only with 1TB)

- delivery_day ∈ {Tue, Wed, Thu}

- must include feature A, cannot include feature B

Propagation will remove values from domains immediately.

That “delivery_day” constraint alone cuts the space by 40%. Compatibility rules usually cut it harder than you expect, because they cascade. Suddenly you’re not choosing from 40,000 bundles. You’re choosing from 37.
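A toy version of the laptop bundle shows the same effect at small scale. All prices and the budget below are invented for illustration, and the catalog is far smaller than the 40,000-bundle scenario, but the mechanics are the same: prune a domain first, then apply the cross-variable rules.

```python
from itertools import product

# Hypothetical prices; none of these numbers come from a real catalog.
cpu_price = {"i5": 700, "i7": 900, "Ryzen7": 850}
ram_price = {"16GB": 0, "32GB": 120}
storage_price = {"512GB": 0, "1TB": 100}
days = ["Mon", "Tue", "Wed", "Thu", "Fri"]
budget = 1000

raw_count = len(cpu_price) * len(ram_price) * len(storage_price) * len(days)

# A single-variable rule prunes a domain before any bundle is built.
allowed_days = [d for d in days if d in {"Tue", "Wed", "Thu"}]
after_days = (len(cpu_price) * len(ram_price)
              * len(storage_price) * len(allowed_days))

def feasible(cpu, ram, storage):
    """Compatibility rule plus the budget cap."""
    if cpu == "i7" and storage == "512GB":
        return False  # pretend i7 configs ship only with 1TB
    total_cost = cpu_price[cpu] + ram_price[ram] + storage_price[storage]
    return total_cost <= budget

shortlist = [
    (cpu, ram, storage, day)
    for cpu, ram, storage in product(cpu_price, ram_price, storage_price)
    if feasible(cpu, ram, storage)
    for day in allowed_days
]
```

With these made-up numbers the raw space is 60 bundles, the delivery rule alone leaves 36 (the 40% cut), and the compatibility and budget rules bring the shortlist down to 24.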

Source: Spot Intelligence


Why CP Feels Like Cheating: Global Constraints and Propagation

A hidden reason CP is so good at filtering big option sets is that it doesn’t only use tiny, local rules.

It has global constraints: high-level patterns that solvers understand deeply (like “all values must be different,” “these tasks cannot overlap,” and “capacity must not be exceeded”).

Instead of you spelling out thousands of micro-rules, you say, “These meetings can’t overlap,” and the solver brings a whole toolbox of pruning logic.

This is also why CP ends up in scheduling and planning so often.

IBM’s CP Optimizer is designed specifically for constraint satisfaction and optimization, including scheduling-heavy problems.
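To get a feel for what a global constraint buys you, here is a deliberately tiny "all values must be different" propagator in plain Python. The function name and the meeting-room data are hypothetical. Real solvers use much stronger matching-based algorithms; this sketch only removes already-fixed values and applies a pigeonhole check.

```python
def all_different(domains):
    """Return pruned domains, or None if the variables cannot all differ."""
    domains = {var: set(values) for var, values in domains.items()}
    changed = True
    while changed:
        changed = False
        for var, values in domains.items():
            if len(values) == 1:
                (fixed,) = values
                # A value already taken by one variable is off-limits elsewhere.
                for other, other_values in domains.items():
                    if other != var and fixed in other_values:
                        other_values.discard(fixed)
                        changed = True
        if any(not values for values in domains.values()):
            return None
    # Pigeonhole check: fewer distinct values than variables means failure.
    if len(set().union(*domains.values())) < len(domains):
        return None
    return domains

rooms = all_different({
    "standup": {"R1"},
    "review": {"R1", "R2"},
    "demo": {"R1", "R2", "R3"},
})

impossible = all_different({"a": {1, 2}, "b": {1, 2}, "c": {1, 2}})
```

One fixed meeting cascades through the other two, so each ends up with a single room, and the second call fails immediately because three variables cannot share two values. You wrote one rule; the propagator did the pairwise reasoning.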

Example: Scheduling Without the Spreadsheet Rage

Scheduling is where humans most commonly lie to themselves.

You start with: “I’ll just move this meeting.”

Then: “Wait, that breaks the dependency.”

Then: “Why is this person double-booked?”

Then: “I hate time.”

Constraint-based reasoning turns scheduling into a controlled demolition. You model:

- tasks with durations,

- resources (people/machines) with availability,

- precedence constraints (“A before B”),

- capacity constraints (“no more than 2 tasks at once”),

- time windows (“must happen between 10 and 3”).

The solver filters out impossible placements early.

It doesn’t “try everything”; it trims the tree of possibilities as soon as a partial schedule violates a constraint.

With the right constraints, most of the nonsense never gets generated in the first place.
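The "trim the tree early" idea can be sketched as a small backtracking scheduler. The task names, durations, and the 9-to-15 window are assumptions made up for this example, and one shared resource stands in for the capacity rule. A real CP solver would add propagation on top of this kind of search.

```python
# Hypothetical tasks with durations in hours, all on one shared resource.
tasks = {"design": 2, "build": 3, "review": 1}
precedence = {("design", "build"), ("build", "review")}  # (first, then)
window = (9, 15)  # every task must start and finish inside this window

def consistent(schedule, task, start):
    """Check a candidate placement against everything already scheduled."""
    end = start + tasks[task]
    if start < window[0] or end > window[1]:
        return False
    for other, other_start in schedule.items():
        other_end = other_start + tasks[other]
        if start < other_end and other_start < end:
            return False  # overlap on the shared resource
        if (other, task) in precedence and other_end > start:
            return False  # a predecessor has not finished yet
        if (task, other) in precedence and end > other_start:
            return False  # this task would finish after its successor starts
    return True

def solve(schedule, remaining):
    """Depth-first search that abandons a branch at the first violation."""
    if not remaining:
        return dict(schedule)
    task, rest = remaining[0], remaining[1:]
    for start in range(window[0], window[1]):
        if consistent(schedule, task, start):
            schedule[task] = start
            result = solve(schedule, rest)
            if result is not None:
                return result
            del schedule[task]  # undo and try the next start time
    return None

plan = solve({}, list(tasks))
```

Here the window is exactly as long as the total work, so the only feasible plan is design at 9, build at 11, and review at 14. Every placement that overlaps or breaks a dependency is rejected before anything is stacked on top of it.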

Data Filtering Counts Too

If your “large option set” is a dataset, constraint reasoning can still be your friend.

The Semantic Web world has an entire standard for this: SHACL, a W3C recommendation for validating RDF graphs against constraint “shapes.”

Different domain, same philosophy:

- You define what valid data must look like (required fields, allowed values, patterns).

- Validation filters out records that don’t comply.

- Downstream logic stops tripping over garbage inputs.

In other words, constraints aren’t only for choosing one best option. They’re also for shrinking and cleaning the universe you’re operating in.
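The same philosophy can be sketched in plain Python. This is not SHACL and does not touch RDF; the `shape` dictionary, field names, and sample records are hypothetical and only mimic the idea of required fields, allowed values, and patterns.

```python
import re

# A hypothetical "shape": what a valid record must look like.
shape = {
    "name": {"required": True},
    "tier": {"required": True, "allowed": {"free", "pro", "enterprise"}},
    "email": {"required": True, "pattern": r"[^@\s]+@[^@\s]+\.[^@\s]+"},
}

def violations(record, shape):
    """List every rule the record breaks; an empty list means valid."""
    problems = []
    for field, rule in shape.items():
        value = record.get(field)
        if value is None:
            if rule.get("required"):
                problems.append(f"{field}: missing")
            continue
        if "allowed" in rule and value not in rule["allowed"]:
            problems.append(f"{field}: value not allowed")
        if "pattern" in rule and not re.fullmatch(rule["pattern"], str(value)):
            problems.append(f"{field}: bad format")
    return problems

records = [
    {"name": "Acme", "tier": "pro", "email": "ops@acme.example"},
    {"name": "Globex", "tier": "platinum", "email": "hello@globex.example"},
    {"tier": "free", "email": "not-an-email"},
]

valid = [r for r in records if not violations(r, shape)]
```

Only the first record passes. The second has a tier outside the allowed set, and the third is missing a name and has a malformed email, so neither reaches downstream logic.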

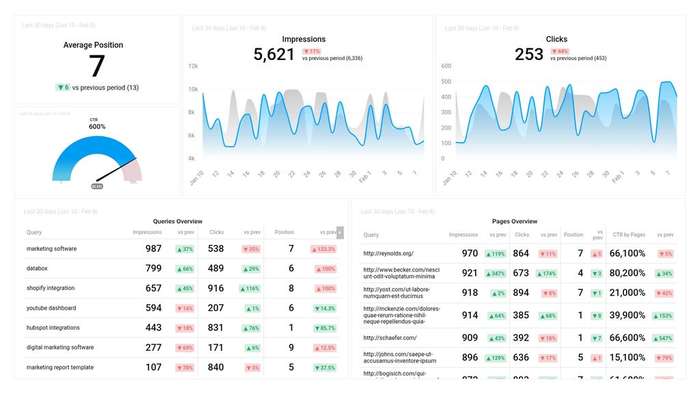

Source: DataBox


Modeling Matters More Than the Solver

Tools are great, but the leverage comes from how you express the problem.

MiniZinc exists because people got tired of rewriting the same model for different solvers. It’s a solver-independent constraint modeling language: describe the problem once, then run it on different backends.

That “describe it cleanly” mindset is the real win. If your constraints are vague, you’ll get vague results. If your constraints are sharp, your option set collapses into something a human can actually pick from.

When you’re drafting constraints, you often want quick sanity checks, small toy examples, or a way to test logic without spinning up a whole environment.

Math Cracker (useful tools for math and programming) is perfect for those “does this rule even make sense?” moments, especially when you’re translating business talk into something a solver won’t misinterpret.

Once your model is crisp, the solver is just the engine, and the engine choice matters a lot less than the map you drew. Nail the constraints, and that “too many options” problem turns into a clean, confident yes-or-no pipeline.
